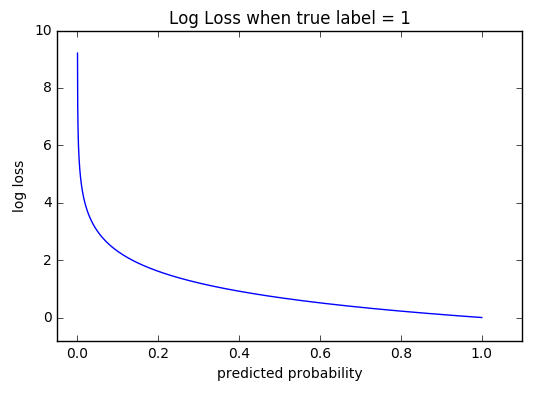

When working on a Machine Learning or Deep Learning problem, loss/cost functions are used to optimize the model during training. The objective is almost always to minimize the loss function. Cross-Entropy loss is one of the most important cost functions. It is used to optimize classification models. Understanding Cross-Entropy depends on understanding the Softmax activation function. I have put up another article below to cover this prerequisite.

Consider a 4-class classification task where an image is classified as either a dog, cat, horse or cheetah.

Input image source: Photo by Victor Grabarczyk on Unsplash.

In the above Figure, Softmax converts logits into probabilities. The purpose of Cross-Entropy is to take the output probabilities (P) and measure the distance from the truth values (as shown in the Figure below).

For the example above, the desired output is the class dog, but the model's output probabilities differ from it. The objective is to make the model output as close as possible to the desired output (truth values). During model training, the model weights are iteratively adjusted with the aim of minimizing the Cross-Entropy loss. This process of adjusting the weights is what defines model training, and as the model keeps training and the loss keeps decreasing, we say that the model is learning.

The concept of cross-entropy traces back to the field of Information Theory, where Claude Shannon introduced the concept of entropy in 1948. Before diving into the Cross-Entropy cost function, let us introduce entropy.

Entropy

The entropy of a random variable X is the level of uncertainty inherent in the variable's possible outcomes.

For a probability distribution p(x) and a random variable X, entropy is defined as follows:

$$H(X) = -\sum_{x} p(x) \log p(x)$$

Equation 1: Definition of Entropy.

Reason for the negative sign: log(p(x)) < 0 for all p(x) in (0,1). p(x) is a probability distribution, and therefore its values must range between 0 and 1.

A plot of log(x). For x values between 0 and 1, log(x) < 0 (is negative).

The greater the value of entropy, H(X), the greater the uncertainty of the probability distribution, and the smaller the value, the less the uncertainty.

Consider the following 3 "containers" with shapes: triangles and circles.

3 containers with triangle and circle shapes.

Container 1: The probability of picking a triangle is 26/30 and the probability of picking a circle is 4/30. For this reason, picking one shape and/or not picking the other is more certain.

Container 2: The probability of picking a triangle is 14/30, and 16/30 otherwise.
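To make the entropy definition concrete, here is a minimal sketch that evaluates it for the probabilities given for Container 1 and Container 2. The function name `entropy` and the choice of base-2 logarithms (so the result is in bits) are assumptions made for this illustration; the article does not fix a log base.

```python
import math


def entropy(probs, base=2):
    """Shannon entropy H(X) = -sum(p * log(p)) of a discrete distribution.

    `base=2` is an assumption for this sketch; it gives the result in bits.
    """
    # Outcomes with p == 0 are skipped: they contribute nothing to the sum
    # (p * log(p) tends to 0 as p tends to 0), and log(0) is undefined.
    return -sum(p * math.log(p, base) for p in probs if p > 0)


# Probabilities from the container example: [triangle, circle]
container_1 = [26 / 30, 4 / 30]
container_2 = [14 / 30, 16 / 30]

h1 = entropy(container_1)
h2 = entropy(container_2)

print(f"Container 1: H = {h1:.3f} bits")
print(f"Container 2: H = {h2:.3f} bits")
```

Container 1 comes out at roughly 0.57 bits and Container 2 at just under 1 bit, matching the intuition that a nearly even split between triangles and circles is the more uncertain case.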
